Most user research doesn’t inform strategy. It confirms bias. Product teams run surveys, collect responses, and walk away with the answers they were looking for. They conduct interviews and ask leading questions. They build personas from demographic data and call it “user-centric design.” Then they wonder why their carefully researched product misses the mark.

The problem isn’t that user research is broken. The problem is that most teams use research to validate decisions they’ve already made, rather than to challenge assumptions they haven’t yet questioned.

Real user research — the kind that changes your strategic decisions — is uncomfortable. It surfaces things you didn’t want to know. It contradicts your roadmap. It forces you to rethink your positioning. And that is precisely why it is so valuable.

This article is about the research methods that actually reveal strategic insights: the approaches that go beyond surface-level feedback to uncover the motivations, frustrations, and unmet needs that should be shaping your user-centric vision. It is also about how to synthesise what you learn into decisions that matter — not just incremental feature tweaks, but strategic pivots grounded in evidence.

Why Traditional Research Often Fails to Drive Strategy

One of the most common forms of user research in product teams is the post-release survey. You ship a feature, send a satisfaction survey, and collect Net Promoter Scores. This tells you whether users liked what you built. It tells you almost nothing about what you should build next.

There are three structural reasons why traditional research fails at the strategic level.

First, it asks the wrong questions. Surveys optimised for satisfaction measure how users feel about existing features, not what problems remain unsolved. You learn whether users are happy with your current product, not whether your current product is solving the right problem.

Second, it recruits the wrong users. Most research is conducted with existing, engaged users — the people who already love your product. These users are systematically different from the users you haven’t yet won, the churned users who left, and the non-users who never tried you. Strategic insights often live in those populations, not in your fan base.

Third, it asks users to predict their own behaviour. Users are notoriously unreliable at predicting what they will do. They say they want feature X, but when you build it, they don’t use it. They say they would pay more, but when you raise prices, they churn. Behavioural research (observing what users actually do) is almost always more revealing than attitudinal research (asking users what they think).

Strategic user research corrects for all three of these failure modes. It asks open, exploratory questions. It recruits diverse users, including non-users and churned users. And it prioritises observation over self-report.

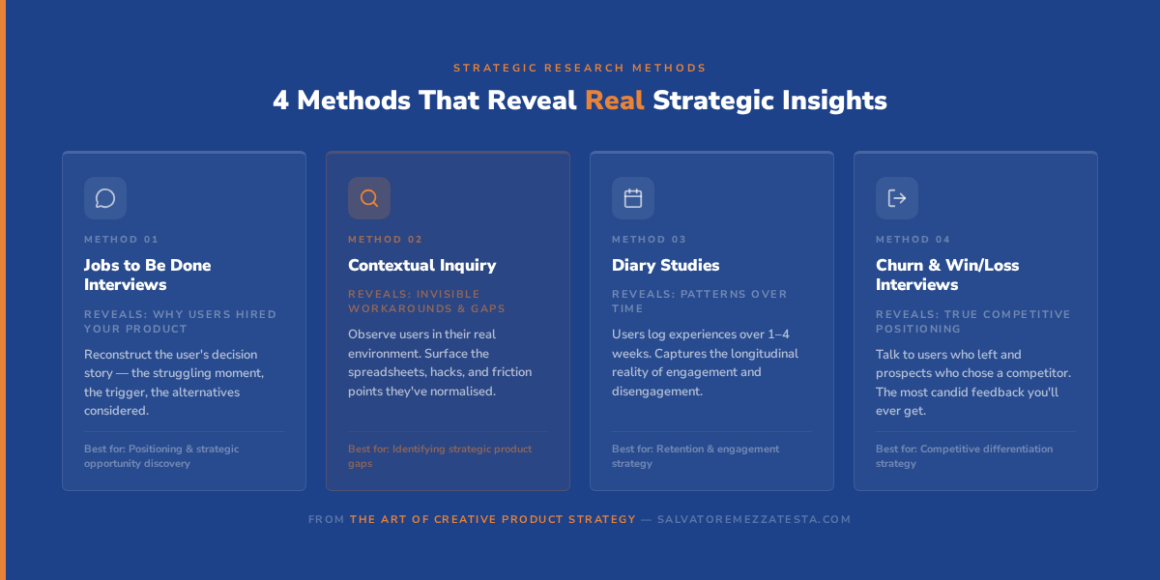

Research Methods That Actually Reveal Strategic Insights

Jobs to Be Done Interviews

The Jobs to Be Done (JTBD) framework, popularised by Clayton Christensen and refined in practice by Bob Moesta at The Re-Wired Group, is one of the most powerful lenses for strategic user research. The core insight is simple: users don’t buy products; they hire them to do a job. Understanding the job gives you a far more durable strategic insight than understanding the user’s demographics or preferences.

A JTBD interview focuses on a specific moment of purchase or adoption. You ask the user to walk you through the story of how they came to use your product: what was happening in their life, what triggered the search, what alternatives they considered, and what made them choose you. You are not asking about features. You are reconstructing a decision.

The strategic gold is in the “struggling moment” — the specific context in which the user felt enough pain to seek a solution. If ten users describe the same struggling moment, you have identified a strategic opportunity. If the struggling moments are all different, you have a segmentation problem. For a deeper introduction to the methodology, JTBD.info is an excellent starting point.

Contextual Inquiry

Contextual inquiry is a field research method in which you observe users in their natural environment — at their desk, in their workflow, in the context where your product is (or should be) used. Unlike lab-based usability testing, contextual inquiry captures the messy reality of how users actually work: the workarounds they’ve built, the tools they use alongside yours, the interruptions that break their flow.

The strategic value of contextual inquiry is that it surfaces the invisible. Users rarely articulate the workarounds they’ve normalised. They don’t mention the spreadsheet they maintain alongside your product because they’ve forgotten it’s a workaround — it’s just how they work. Observing that spreadsheet tells you more about your product’s strategic gaps than any survey question.

Contextual inquiry is time-intensive, but even three to five sessions with representative users can fundamentally change your understanding of the problem space. Tools like UserTesting make it possible to run remote contextual sessions at scale, reducing the logistical burden considerably.

Diary Studies

A diary study asks users to record their experiences with a product or problem over an extended period — typically one to four weeks. Participants make short entries (text, voice, or video) at regular intervals or triggered by specific events. The result is a longitudinal picture of how users experience your product in the context of their lives, not in the artificial context of a research session.

Diary studies are particularly valuable for products that are used intermittently, or where the strategic question involves patterns over time. If you are trying to understand why users disengage after the first week, a diary study will tell you far more than an exit survey.

Churn and Win/Loss Interviews

The most underused research method in product strategy is the conversation with users who left. Churned users have already made a decision — they voted with their feet — and they are often willing to tell you exactly why, with a candour that current users rarely offer.

Win/loss interviews (borrowed from sales) apply the same logic to acquisition: why did a prospect choose you, and why did another prospect choose a competitor? These conversations reveal your true competitive positioning — not the positioning you claim, but the positioning that exists in the minds of buyers.

Both types of interview should be conducted by someone who was not involved in the sales or retention process. Neutrality is essential. The goal is to understand the decision, not to re-sell.

Personas and Journey Maps: Done Right

Personas and journey maps are among the most commonly misused tools in user research. Done poorly, they are decorative artefacts — colourful posters on the wall that no one consults when making decisions. Done well, they are living strategic documents that anchor every product decision in a specific human reality.

Personas done right are built from research, not from assumptions. They are grounded in patterns observed across multiple users — recurring goals, recurring frustrations, recurring behaviours. They are not demographic profiles (age, gender, job title) but behavioural profiles (motivations, mental models, decision-making patterns). And they are updated when research reveals that the pattern has changed.

The most useful persona is not the most detailed one. It is the one that creates the sharpest strategic tension. If your persona is so broad that every feature serves them, it is not a strategic tool but a permission slip. A useful persona forces trade-offs: building for this user means not building for that user. That tension is where strategy lives. The Nielsen Norman Group’s guidance on persona creation is among the more rigorous publicly available resources on this topic.

Journey maps done right map the experience of a specific persona through a specific job, from the moment of need recognition to the moment of resolution. They capture not just the steps in the process but the emotional experience at each step: the moments of friction, confusion, delight, and abandonment.

The strategic value of a journey map is in the gaps: the moments where your product is absent, where users rely on workarounds, where the experience breaks down. Those gaps are your opportunity space. A journey map that shows a smooth, uninterrupted experience is either a sign of a great product or a sign of a superficial map.

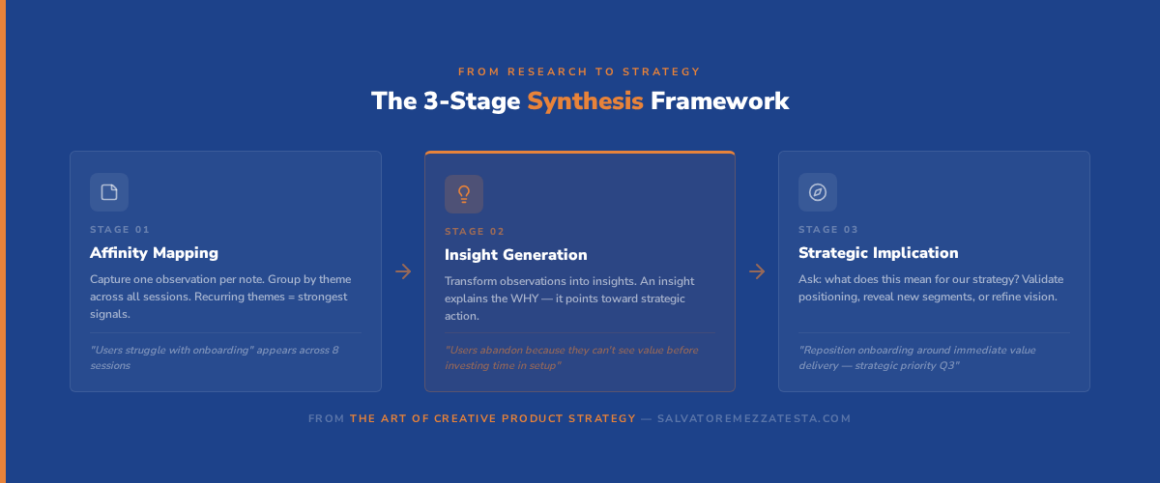

How to Synthesise Research into Strategic Decisions

Collecting research is the easy part. Synthesising it into strategic decisions is where most teams struggle.

The fundamental challenge is moving from individual stories to strategic patterns. A single user’s frustration is an anecdote. Ten users sharing the same frustration is a signal. Thirty users describing the same struggling moment is a strategic imperative.

The synthesis process has three stages.

Stage 1: Affinity mapping. After each research session, capture observations as individual notes (one observation per note). Group notes by theme across sessions. Themes that recur across multiple users and multiple research methods are your strongest signals.

Stage 2: Insight generation. An insight is not an observation. “Users find the onboarding confusing” is an observation. “Users abandon onboarding because they cannot see the value of the product before they are asked to invest time in setup” is an insight. Insights explain the why behind the what. They point toward strategic action.

Stage 3: Strategic implication. For each insight, ask: “What does this mean for our strategy?” Does it validate your current positioning? Does it reveal an unaddressed segment? Does it suggest a different user-centric vision than the one you have articulated? The strategic implication is the bridge between research and decision.

This three-stage process is covered in depth in Module 5 of The Art of Creative Product Strategy, alongside worksheets and worked examples.
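
Stage 1 of the synthesis process lends itself to a small script. The following is a minimal Python sketch, assuming each note has already been tagged by hand with a theme during affinity mapping. The `Note` fields, the `theme_strength` function, and the sample data are all hypothetical and exist only for illustration. The sketch counts how many distinct participants and distinct research methods sit behind each theme, so that one talkative participant cannot inflate a signal.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Note:
    participant: str  # anonymised participant ID (hypothetical)
    method: str       # research method the note came from
    theme: str        # theme assigned by hand during affinity mapping
    text: str         # the single observation on the note


def theme_strength(notes):
    """Return (theme, distinct participants, distinct methods), strongest first."""
    participants = defaultdict(set)
    methods = defaultdict(set)
    for note in notes:
        participants[note.theme].add(note.participant)
        methods[note.theme].add(note.method)
    rows = [
        (theme, len(participants[theme]), len(methods[theme]))
        for theme in participants
    ]
    # Sort by participant count, then method count, then name for stable output.
    return sorted(rows, key=lambda row: (-row[1], -row[2], row[0]))


# Illustrative data only; not drawn from any real study.
notes = [
    Note("P1", "jtbd_interview", "value unclear before setup", "Could not tell what I would get"),
    Note("P2", "jtbd_interview", "value unclear before setup", "Setup felt like homework"),
    Note("P3", "diary_study", "value unclear before setup", "Gave up on day two"),
    Note("P1", "diary_study", "value unclear before setup", "Still unsure why I am configuring this"),
    Note("P1", "jtbd_interview", "spreadsheet workaround", "I track status in a sheet"),
    Note("P4", "contextual_inquiry", "spreadsheet workaround", "Keeps a side spreadsheet open"),
]

for theme, n_participants, n_methods in theme_strength(notes):
    print(f"{theme}: {n_participants} participants, {n_methods} methods")
```

Counting distinct participants rather than raw notes is a deliberate choice here: P1 contributes two notes to the first theme but is counted once. The tally only ranks themes; turning a theme into an insight and a strategic implication remains a judgement call for the team.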

Case Study: How Spotify Uses User Research for Strategy

Spotify’s product strategy is widely recognised as one of the most user-centric in the industry. But what makes it distinctive is not the volume of research Spotify conducts but how research is integrated into strategic decision-making at every level.

Spotify’s approach to user research is built around three principles that are worth examining for any product team.

1. Research as a continuous practice, not a project

Spotify embeds researchers within product squads rather than centralising them in a separate research function. This means research happens continuously, in parallel with development, rather than as a gate before or after a project. Strategic decisions are informed by fresh research, not by a six-month-old study. The Spotify Engineering Blog has documented aspects of this approach in detail.

2. Quantitative and qualitative in dialogue

Spotify’s scale gives them access to extraordinary behavioural data — listening patterns, skip rates, playlist behaviour, session length. But they use qualitative research to interpret that data. When the data shows that users skip a certain type of recommendation, qualitative research reveals why. The combination produces insights that neither method could generate alone.

3. Research that challenges strategy, not just validates it

Spotify has a reputation for using research to challenge product assumptions. When Spotify was considering its podcast strategy, internal research reportedly revealed that users did not think of Spotify as a podcast platform but as a music platform. That insight could have been used to argue against podcasts. Instead, it shaped the acquisition strategy (Anchor, Gimlet) and the product integration approach. Research informed the strategic bet; it did not just validate it.

This approach to embedding research within strategy is precisely what Module 5 of The Art of Creative Product Strategy teaches. If you want to build a research practice that drives strategic thinking rather than just feature decisions, the user-centric module is the place to start.

Common Pitfalls in User-Centric Strategy

Even teams that invest seriously in user research make predictable mistakes when translating research into strategy.

The loudest user fallacy. The users who respond to surveys, attend focus groups, and send detailed feedback emails are not representative of your user base. They are the most engaged, most opinionated, and often most atypical users you have. Building strategy around their feedback means building for an outlier.

Research as a decision-making substitute. Research should inform decisions, not make them. If your team is paralysed without research, or if every strategic decision requires a new research project, you have a decision-making problem, not a research problem. Research reduces uncertainty; it does not eliminate it.

Ignoring the non-user. The most important strategic insight is often why people are not using your product. Non-users have made a decision — they chose an alternative or chose to do nothing — and that decision contains strategic information. Most teams never talk to non-users.

Optimising for the current user at the expense of the future user. Your current users have adapted to your product as it is. They have built workflows around your limitations. If you only research current users, you will build a product that serves their adapted behaviour, not the behaviour of the users you want to win next.

The Lean UX methodology, developed by Jeff Gothelf and Josh Seiden, offers a practical framework for avoiding many of these pitfalls by framing assumptions as hypotheses and testing them within the development process.

Connecting Research to the Book

Module 5 of The Art of Creative Product Strategy is dedicated entirely to the user-centric approach to product strategy. For teams that want to explore creative solutions to the problems their research uncovers, the next article in this series covers the creativity-feasibility balance — how to turn strategic insights into bold, shippable ideas.

Frequently Asked Questions

Q1: What is the difference between user research and market research?

User research focuses on understanding how specific users behave, think, and make decisions. It is usually qualitative and behavioural in nature, conducted with individual users or small groups. Market research focuses on market size, competitive landscape, and aggregate trends. It is typically quantitative and population-level. Both are essential for product strategy, but they answer different questions. User research tells you what your users need; market research tells you how large the opportunity is.

Q2: How many users do I need to interview for qualitative research?

The standard guidance from the Nielsen Norman Group is that about five users are enough to uncover most usability issues in a qualitative study. For strategic research, where you are looking for patterns across diverse user types, ten to fifteen interviews per user segment is a more reliable threshold. The signal is saturation: when you stop hearing new insights, you have enough data. Quality of recruitment matters far more than quantity of respondents.
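
The saturation signal can be tracked with a few lines of code. The following is a rough Python sketch, assuming each interview has been coded into a set of theme labels. The function names, the sample themes, and the three-interview window are all hypothetical choices for illustration, not an established threshold.

```python
def new_themes_per_interview(coded_interviews):
    """For each interview, in order, count themes not heard in any earlier one."""
    seen = set()
    counts = []
    for themes in coded_interviews:
        fresh = set(themes) - seen
        counts.append(len(fresh))
        seen |= fresh
    return counts


def is_saturated(coded_interviews, window=3):
    """Treat the study as saturated when the last `window` interviews add nothing new.

    The default window of 3 is an arbitrary assumption; pick one that fits your study.
    """
    counts = new_themes_per_interview(coded_interviews)
    return len(counts) >= window and sum(counts[-window:]) == 0


# Illustrative coding of five interviews within one segment.
interviews = [
    {"value unclear before setup", "spreadsheet workaround"},
    {"spreadsheet workaround", "pricing confusion"},
    {"value unclear before setup"},
    {"pricing confusion"},
    {"value unclear before setup", "spreadsheet workaround"},
]

print(new_themes_per_interview(interviews))
print(is_saturated(interviews))
```

A check like this is only as good as the coding behind it, and it should be run per segment: a new segment can reopen themes that looked saturated elsewhere.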

Q3: Should we do user research before or after we build?

Both, and continuously. Before you build, research tests your assumptions about the problem and the user. After you build, research reveals how users actually interact with your solution, which is almost always different from how you imagined they would. The most effective teams treat research as a continuous practice embedded in their development process, not as a gate before or after a project.

Q4: How do we avoid confirmation bias in user research?

Confirmation bias is the tendency to seek and interpret evidence in ways that confirm your existing beliefs. To counter it: ask open-ended questions rather than leading ones; recruit users who are likely to challenge your assumptions (churned users, non-users, users of competitor products); have someone who disagrees with your hypothesis conduct or observe the research; and actively look for disconfirming evidence in your synthesis. The goal is to be surprised, not validated.

Q5: What is the difference between a persona and a user segment?

A user segment is a group of users with shared measurable characteristics — demographic, behavioural, or attitudinal. A persona is a detailed, narrative representation of a specific user type, grounded in research and designed to be memorable and actionable. Segments are useful for analysis; personas are useful for decision-making. The best personas are built from segments but go beyond them — they capture the motivations, frustrations, and mental models that drive behaviour.

Q6: How do we use user research to inform strategy rather than just features?

The key is to ask strategic questions, not feature questions. Instead of “What features do you want?”, ask “What problem are you trying to solve?” and “What does success look like for you?” Instead of “How would you rate this feature?”, ask “What would you do if this feature didn’t exist?” Strategic research focuses on the problem space, not the solution space. Features are answers; strategy is about asking the right questions.

Q7: Can we do meaningful user research with a limited budget?

Absolutely. Five to ten direct conversations with users, conducted over video call at little or no cost, will often reveal more strategic insight than a thousand survey responses. Guerrilla research (intercepting users in context, using social media communities, monitoring forums where users discuss your category) costs little beyond your time and is often more revealing than formal research. Budget is not the constraint; commitment to asking uncomfortable questions is.

Q8: How do we handle conflicting user feedback?

Conflicting feedback is a signal, not a problem. It usually means you have multiple distinct user segments with different needs. Your job is not to reconcile the conflict but to understand it: which segment is strategically most important? Which conflict represents a genuine trade-off that your strategy must make? Conflicting feedback is where strategy becomes necessary — it forces you to choose.

Q9: Should users be involved in strategy development?

Users are invaluable for informing and validating strategy, but they should not develop it. Users optimise for their immediate, individual needs; strategists optimise for long-term market position and organisational capability. If you let users write your strategy, you will end up with a feature list, not a vision. Use research to understand the problem space; use strategic thinking to define the solution space.

Q10: How often should we conduct user research?

Continuously, at whatever cadence your team can sustain. Monthly research sessions — even brief ones, even with just two or three users — are more valuable than an annual deep dive. Regular research keeps your team connected to user reality and catches market shifts before they become crises. The goal is not to conduct research; it is to make research a habit.